Have you heard about this AI-powered crypto blockchain NFT startup that uses LLMs to disrupt the popcorn industry? Yeah, me too. 🤨

The goal of this article is to give you a better sense of what is what in AI: where things begin and where they end. Definitions often rely on arbitrarily choosing those boundaries, but in AI, as in Nature, things can be fuzzy and overlap. Unless otherwise cited, the nomenclatures and definitions below are my own.

Artificial Intelligence, a recipe for success!

I like Alan Turing’s definition of Artificial Intelligence for its simplicity:

Artificial Intelligence is a decision made by a computer that, by its smartness, is indistinguishable from a human-made decision.

People often claim that AI is “ill-defined”, because Alan Turing’s definition is very broad. I think it seems ill-defined because it forces us to confront our ignorance about human consciousness and intelligence, and because it is easy to confuse thinking (in the sense of cognition, not emotion) with computing.

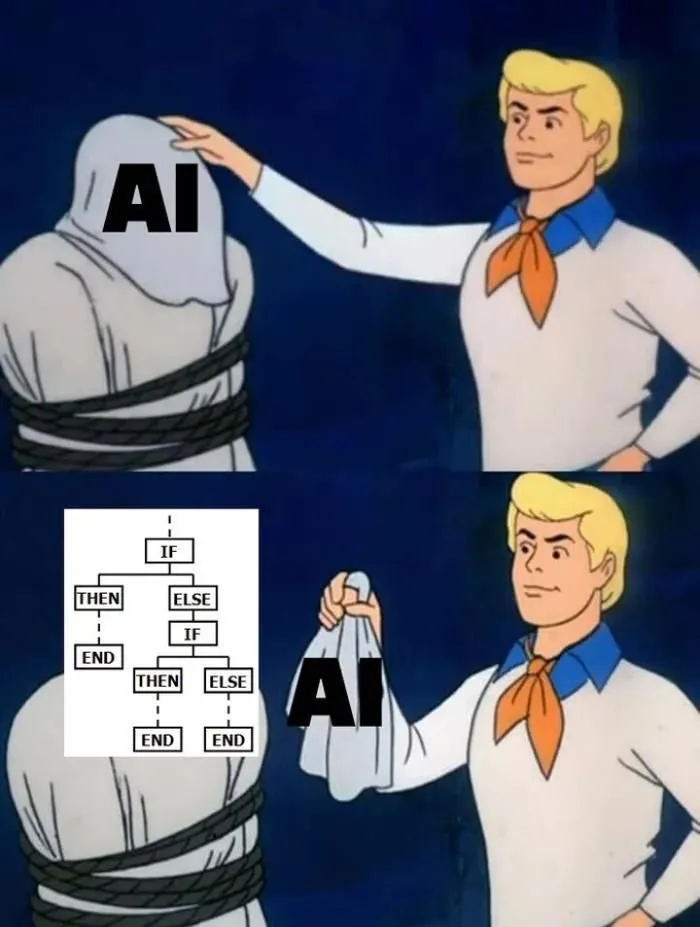

At its essence, what is a decision made by a machine? According to Alan Turing’s definition, a clever macro in Excel would technically be an AI. Well, yes. A set of rules like “if this: do that; else: do that other thing”, if executed by a computer, is an AI.
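To make this concrete, here is a minimal Python sketch of such a rule-based “AI”: a hand-written if/else decision. The thermostat scenario, the function name `decide_heating`, and the temperature thresholds are all hypothetical, chosen only for illustration.

```python
def decide_heating(temperature_c: float, someone_home: bool) -> str:
    """A hand-written rule set (hypothetical thermostat): every case is typed in by a human."""
    # Rule 1: nobody is home, so only protect the pipes from freezing.
    if not someone_home:
        return "heat" if temperature_c < 5 else "off"
    # Rule 2: someone is home, so keep it comfortable.
    if temperature_c < 19:
        return "heat"
    # Else: do that other thing.
    return "off"


print(decide_heating(15.0, someone_home=True))   # heat
print(decide_heating(15.0, someone_home=False))  # off
```

Every new situation (a window left open, a heat wave, a holiday) would need yet another hand-typed rule, which is exactly why writing rules by hand does not scale.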

Generating those rules can be very difficult and/or expensive. Nobody wants to sit at a computer, typing out every possible scenario and defining the action for each one. So AI is not so much about the decision taken as about how the decision was reached. How can we learn to generate those rules automatically, at scale, whatever the situation? That is what the field of AI is concerned with.

What is an AI?

Concretely, for a computer to make a decision, it needs to follow a computer program, a set of instructions. This set of instructions is called an algorithm. Think of it as a recipe.

Let’s cook an algorithm together! 👩‍🍳

Consider a recipe for a cake, and let’s write it down mathematically as f(). Your recipe f() takes some ingredients (which we will call inputs): f(flour, butter, milk, egg). Your recipe (algorithm) f() tells you what to do with your ingredients (inputs). You mix them, pour them into a mold, and put it in the oven at some temperature for some time, et voilà! 🍰! The cake is the output; let’s denote it y.

An algorithm is a set of instructions f() which, once applied to your input x, outputs y.

f(x) = y

The whole point of AI is figuring out how to create f() such that when you give it your input x, you get what you want as y.
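One way to picture “figuring out how to create f()” is as a search: given a few example pairs (x, y), try candidate functions and keep the one that reproduces them all. The sketch below is a toy illustration under that assumption, not how real AI systems search; the candidate list `CANDIDATES`, the helper `find_f`, and the example pairs are all made up.

```python
from typing import Callable, Optional

# Hypothetical candidate "recipes": the space of functions we are willing to consider.
CANDIDATES: dict[str, Callable[[int], int]] = {
    "add one": lambda x: x + 1,
    "double": lambda x: 2 * x,
    "square": lambda x: x * x,
}

def find_f(examples: list[tuple[int, int]]) -> Optional[str]:
    """Return the name of the first candidate f with f(x) == y for every example."""
    for name, f in CANDIDATES.items():
        if all(f(x) == y for x, y in examples):
            return name
    return None  # no candidate fits: our space of recipes is too small


# Inputs x paired with the outputs y we want.
examples = [(1, 2), (3, 6), (5, 10)]
name = find_f(examples)
print(name)                 # double
print(CANDIDATES[name](7))  # 14
```

Learning algorithms replace this short, hand-picked list with enormous families of functions (and much smarter ways of searching them), but the goal stays the same: find f() such that f(x) = y.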

Tasks, Tools, and the rule of Three

To sort out the buzzword salad of AI, I like to divide things this way:

- What can AI do: The type of tasks AI can do.

- How can AI do it: The type of (mathematical) tools AI can use.

- Where can AI do it: The domains of application of AI.

Let’s dive in! 🏊‍♀️

A note: The following nomenclature is my own way of explaining an enormous field. It is meant as a comprehensive but not exhaustive introduction.

What can AI do?

Artificial Intelligence, in essence, is a large collection of algorithms grouped into families, and each family does very specific things. What are those specific things?

- Machine Learning: What group does this x belong to? y is the group.

- Data Mining: What patterns exist in this data x? y is the pattern.

- Reinforcement Learning: What is the next best action given where I am (x)? y is the action you probably should take.

- Optimization: What’s the best solution given problem x? y is the solution.

Machine Learning

What is the difference between Machine Learning (ML) and AI? ML is a sub-family of algorithms within the larger AI domain that focuses on grouping things. As an example, let’s look at a collection of photos. Some photos have muffins on them, and some photos have dogs. We want to automatically group the photos, somehow.

Grouping when you know the groups

If some photos are already labeled 🧁 or 🐶, then we already know which groups exist (muffin and dog). The algorithm learns from the labeled data what a 🧁 or a 🐶 looks like, and continues labeling the rest of the data. This is called classification, where the class is the group. It is a form of supervised learning, because the learning is “supervised” by the existing labels.
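Here is a minimal sketch of classification using k-nearest neighbors, my choice for illustration (the text does not name a specific algorithm). It assumes each photo has already been reduced to two hypothetical numeric features, “roundness” and “fur texture”; the feature values are invented. An unlabeled point simply takes the majority label of its k closest labeled neighbors.

```python
import math
from collections import Counter

# Labeled training data: (features, label). The (roundness, fur_texture) scores
# are hypothetical; real systems learn their features from the pixels.
labeled = [
    ((0.9, 0.1), "muffin"),
    ((0.8, 0.2), "muffin"),
    ((0.85, 0.15), "muffin"),
    ((0.3, 0.9), "dog"),
    ((0.2, 0.8), "dog"),
    ((0.25, 0.85), "dog"),
]

def classify(point, k=3):
    """Label `point` with the majority label among its k nearest labeled neighbors."""
    nearest = sorted(labeled, key=lambda item: math.dist(point, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Continue labeling the unlabeled photos.
for photo in [(0.82, 0.18), (0.28, 0.88)]:
    print(photo, classify(photo))
```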

Grouping when you don’t know the groups

If none of the photos are labeled, then this is unsupervised learning. In this case, the algorithm groups things based on how similar they are, which is called clustering.
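Clustering can be sketched with k-means, one common clustering algorithm (again my choice; the text does not name one). With no labels at all, it alternates between assigning each point to its nearest center and moving each center to the mean of its points. The 2-D points are made up, and for reproducibility the initial centers are simply the first k points rather than a random pick.

```python
import math

def k_means(points, k, iterations=10):
    """Group 2-D points into k clusters; returns (centers, assignments)."""
    centers = list(points[:k])  # naive, deterministic initialization
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points
        ]
        # Update step: move each center to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old center if a cluster ends up empty
                centers[c] = tuple(sum(coord) / len(members) for coord in zip(*members))
    return centers, assignments


# No labels: just points that happen to form two blobs.
points = [(0.9, 0.1), (0.3, 0.9), (0.8, 0.2), (0.2, 0.8), (0.85, 0.15), (0.25, 0.85)]
centers, assignments = k_means(points, k=2)
print(assignments)  # [0, 1, 0, 1, 0, 1]
```

Note that the output is just cluster numbers: the algorithm finds two groups, but it has no idea that one of them is “muffins” and the other “dogs”.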

ML & Prediction

Typically, a supervised or unsupervised algorithm doesn’t output 🧁 or 🐶, but rather a probability (or a score) that an image has a 🧁 or a 🐶 on it. This probability is what is used when predicting things like customer churn (in this case, the groups are “customer churns” and “customer renews”).
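As a minimal sketch of where such a probability can come from: many classifiers (logistic regression, for example) compute a score and squash it into a probability with the logistic function. The churn features, `WEIGHTS`, and `BIAS` below are hypothetical and hand-picked, not fitted to any real data.

```python
import math

def sigmoid(score: float) -> float:
    """Logistic function: maps any real score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-score))

# Hypothetical, hand-picked weights; in practice they are learned from labeled customers.
WEIGHTS = {"months_since_last_login": 0.8, "support_tickets": 0.5, "years_as_customer": -0.6}
BIAS = -1.0

def churn_probability(customer: dict) -> float:
    """Weighted sum of the customer's features, turned into P(churn)."""
    score = BIAS + sum(w * customer[name] for name, w in WEIGHTS.items())
    return sigmoid(score)


p = churn_probability({"months_since_last_login": 3, "support_tickets": 2, "years_as_customer": 1})
print(f"P(churn) = {p:.2f}")
# Turning this into a decision still needs a threshold, e.g. flag the customer if p > 0.5.
```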

Sometimes, it’s hard to tell 🧁 from 🐶!

Data Mining

The apocryphal origin of Data Mining tells the story of a retail chain analyzing its transaction receipts with the Apriori algorithm (my second-favorite algorithm) and discovering a correlation between beer and diapers on Sunday afternoons. The story goes that new dads, watching the football game on Sunday afternoon, were sent to the store to restock on diapers and, thinking about the game, restocked on beer as well. The store put the diapers next to the beer, and sales increased.

While this story is probably not true, you have very likely heard of Target sending baby-product coupons to a pregnant teen before her father knew she was pregnant. That, my dear reader, is Data Mining 🤣.

These two stories hint at the difference from Machine Learning. Both ML and Data Mining look for patterns, but those patterns don’t have the same structure. What grouping would be the most relevant among millions of receipts? Think of the problem another way: an association of items that repeats often enough to be noticeable is a pattern! In essence, this is Data Mining: finding patterns that repeat 🧩.
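Here is a compact Python sketch of the Apriori idea: count how often itemsets appear across receipts, keep only those above a minimum support, and build bigger itemsets only from ones that were already frequent (if {beer, diapers} is rare, {beer, diapers, chips} cannot be frequent). The receipts are invented, and this is a teaching sketch rather than an optimized implementation.

```python
from itertools import combinations

def apriori(transactions, min_support):
    """Return {itemset: count} for every itemset found in at least min_support transactions."""
    transactions = [frozenset(t) for t in transactions]

    def count(candidates):
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:  # the candidate itemset appears on this receipt
                    counts[c] += 1
        return {c: n for c, n in counts.items() if n >= min_support}

    # Level 1: frequent single items.
    frequent = count({frozenset([i]) for t in transactions for i in t})
    result = dict(frequent)
    k = 2
    while frequent:
        # Join step: combine frequent (k-1)-itemsets into k-itemsets.
        prev = list(frequent)
        candidates = {a | b for a in prev for b in prev if len(a | b) == k}
        # Prune step (the "Apriori property"): every (k-1)-subset must be frequent.
        candidates = {c for c in candidates
                      if all(frozenset(s) in frequent for s in combinations(c, k - 1))}
        frequent = count(candidates)
        result.update(frequent)
        k += 1
    return result


receipts = [
    {"beer", "diapers", "chips"},
    {"beer", "diapers"},
    {"diapers", "milk"},
    {"beer", "diapers", "milk"},
    {"bread", "milk"},
]
support = apriori(receipts, min_support=3)
for itemset, n in sorted(support.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), n)

# Confidence of the rule "diapers -> beer": how often beer is bought when diapers are.
print(support[frozenset({"beer", "diapers"})] / support[frozenset({"diapers"})])  # 0.75
```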

Reinforcement Learning

Reinforcement Learning algorithms focus on finding the best next action when the problem is a sequential decision-making problem. For instance, say you are playing chess: what is the best next move?

RL used to be the poor child of AI, because it takes a lot of computational power. Each step opens up a number of possibilities, so the number of possible futures grows exponentially with the number of steps, which makes the future very hard (computationally expensive) to predict. Recent progress in optimization (i.e., how to do things more efficiently) has given rise to actual applications of RL, like AlphaGo.
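To give a flavor of sequential decision-making, here is a tiny tabular Q-learning sketch (Q-learning is one classic RL algorithm; the text does not prescribe one). A hypothetical agent in a five-cell corridor learns, by trial and error, how valuable it is to step left or right in each cell. The reward only arrives in the last cell, so the agent has to learn that the earlier steps matter too. The world, the names, and all parameters (`alpha`, `gamma`, `epsilon`, number of episodes) are illustrative assumptions.

```python
import random

# Hypothetical toy world: a corridor of cells 0..4, with a reward of 1 in cell 4.
N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # step left, step right

def step(state, action):
    """Environment: apply the action, stay inside the corridor, report reward and end."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward, nxt == GOAL

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}

    def greedy(state):
        # Best-known action in this state, breaking ties at random.
        best = max(q[(state, a)] for a in ACTIONS)
        return rng.choice([a for a in ACTIONS if q[(state, a)] == best])

    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit what we know, sometimes explore.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(state)
            nxt, reward, done = step(state, action)
            future = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            # Q-learning update: move the estimate toward reward + discounted future value.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = nxt
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]
print(policy)  # best next action in cells 0..3: [1, 1, 1, 1], always step right
```

Even in this five-cell toy, the agent needs many episodes of trial and error; in chess or Go, the number of states explodes, which is where the computational cost comes from.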

A side note about a pet peeve of mine: Wikipedia claims that RL is “an area of Machine Learning”. I disagree. Clustering and classification are altogether different tasks from sequential decision-making. While there is some overlap in algorithms, tasks, and data between ML and Data Mining, ML and RL differ greatly in the type of algorithms AND the type of tasks AND the type of data they use. Nomenclatures are arbitrary, and this is a hill of zero-foot altitude, but I am a nerd and I will die on it!

Optimization

Last, but certainly not least, Optimization is a heteroclite family of algorithms that, given a problem, output (sometimes approximately) the best solution. What does that mean? The algorithm your GPS uses to find the best way home is an optimization algorithm. The PageRank algorithm, used by Google to rank the pages answering your search query, can also be framed as an optimization problem.
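As an example of an optimization algorithm, here is a minimal sketch of Dijkstra’s shortest-path algorithm, a classic way to find the cheapest route through a road network (I am not claiming this is what any particular GPS product runs). The map `ROADS` and its travel times are invented.

```python
import heapq

# Hypothetical road network: travel times in minutes between places.
ROADS = {
    "work": {"cafe": 5, "highway": 2},
    "cafe": {"park": 4, "home": 12},
    "highway": {"park": 9, "home": 15},
    "park": {"home": 3},
    "home": {},
}

def shortest_path(graph, start, goal):
    """Dijkstra: always expand the closest not-yet-finalized place first."""
    queue = [(0, start, [start])]  # (time so far, place, path taken)
    visited = set()
    while queue:
        time, place, path = heapq.heappop(queue)
        if place == goal:
            return time, path
        if place in visited:
            continue
        visited.add(place)
        for neighbor, minutes in graph[place].items():
            if neighbor not in visited:
                heapq.heappush(queue, (time + minutes, neighbor, path + [neighbor]))
    return None  # goal unreachable


print(shortest_path(ROADS, "work", "home"))  # (12, ['work', 'cafe', 'park', 'home'])
```

The highway looks tempting (2 minutes to get on it), but the algorithm keeps every partial route in play until the cheapest complete one is certain.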

Not everything is a nail

You might have noticed that some real-world problems can be solved by several different algorithms. Let’s say we want to program a robot to autonomously navigate a maze without knowing its layout. We could use an optimization algorithm (map the maze and find the best way out), ML (is this a wall or a corridor?), or RL (what is the best next step, given all the steps we have already taken and what we see in front of us?). Each algorithm is a different tool, and not everything is a nail. Yes, you could use a hammer on a screw, and you would probably succeed. But would it be the easiest and cheapest way to do it? Probably not.

When looking for AI experts and practitioners, look for breadth as well as depth, because the world is a vast place, filled with an infinity of fasteners! 🔨 🔧 🪛

How does AI do it?

Let’s recap. ☕

The field of Artificial Intelligence can be described by grouping algorithms by task. It can also be described by the mathematical tools the algorithms use to reach their conclusions. Going back to the cake example, I could bake a cake in an oven, in a microwave, or on a grill. Not every technique is the best for the task, but each one works!

And you have probably already heard of many AI tools! Deep Learning, Neural Networks, graphs, Bayesian networks, linear regression, transformers, tensors…

Without going into the technical details of each, all of them are ways to conceive of the world mathematically. Each algorithm has a framework, a way of “seeing” the world. In the Apriori algorithm, mythically used to discover that beer and diapers have a lot in common, the world begins and ends with two things: the sets (the receipts) and the items in each set (the goods purchased on each receipt). From this abstraction, the Apriori algorithm applies its rules and finds the associations of items that occur often enough to matter. A similar result could be achieved with a different algorithm that represents the problem in a totally different way (e.g., with a bipartite graph).
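To illustrate the alternative framing, here is a small sketch that stores receipts as a bipartite graph (receipt nodes on one side, item nodes on the other, an edge whenever an item appears on a receipt) and then counts, for each pair of items, how many receipts they share. This shared-neighbor count is my own illustration of the idea, not a specific named algorithm; the receipts are invented.

```python
from collections import defaultdict
from itertools import combinations

receipts = {
    "r1": {"beer", "diapers", "chips"},
    "r2": {"beer", "diapers"},
    "r3": {"diapers", "milk"},
    "r4": {"beer", "diapers", "milk"},
    "r5": {"bread", "milk"},
}

# The bipartite graph, seen from the item side: item -> receipts it is connected to.
item_to_receipts = defaultdict(set)
for receipt, items in receipts.items():
    for item in items:
        item_to_receipts[item].add(receipt)

# Two items are associated when they share receipt neighbors in the graph.
shared = {
    (a, b): len(item_to_receipts[a] & item_to_receipts[b])
    for a, b in combinations(sorted(item_to_receipts), 2)
}
strongest = max(shared, key=shared.get)
print(strongest, shared[strongest])  # ('beer', 'diapers') 3
```

Same receipts, same conclusion, but the world is now made of nodes and edges instead of sets and items.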

An AI is always limited by the framework (the mathematical or statistical tool) it uses. It cannot see beyond it. It cannot go beyond it. But a problem (a task) can be represented in many different ways, and thus be solved in many different ways, some better than others (in accuracy, efficiency, etc.).
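A small illustration of that limit: ordinary least-squares linear regression can only “see” straight lines. Fed points that lie on a perfect parabola (y = x², invented data), it finds no trend at all, not because there is no pattern, but because a curve lies outside its framework. The helper name `fit_line` is hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


xs = [-2, -1, 0, 1, 2]
ys = [x * x for x in xs]  # a perfect, noiseless curve
slope, intercept = fit_line(xs, ys)
print(slope, intercept)  # 0.0 2.0: a flat line through the middle of the parabola
```

Changing the representation (for example, feeding x² instead of x as the input) would let the very same tool capture the curve perfectly.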

On a side note, realizing that problem A is actually problem B once you change its framework (so that we can use an algorithm we know works well on problem B) is called Reduction. It is a central tool of computational complexity theory, a fascinating field. It’s not only about what an algorithm can do, but also whether it can do it in a reasonable amount of time (ideally before the cooling of the universe 🥶).
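A classic textbook reduction, sketched in Python: “does this list contain a duplicate?” (element distinctness) can be reduced to sorting, because after sorting, any duplicates must sit next to each other. Instead of comparing every pair (O(n²) comparisons), we reuse a well-understood O(n log n) algorithm. The function name `has_duplicates` is just for illustration.

```python
def has_duplicates(values) -> bool:
    """Reduce 'find a duplicate' to 'sort': equal values end up adjacent."""
    ordered = sorted(values)  # the problem we already know how to solve efficiently
    return any(a == b for a, b in zip(ordered, ordered[1:]))


print(has_duplicates([3, 1, 4, 1, 5]))  # True
print(has_duplicates([2, 7, 1, 8]))     # False
```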

It is the living embodiment of “thinking outside the 📦”.

Where can AI do it?

You made it this far, and good news: only the easy stuff is left to talk about! 😇 AI algorithms can be applied to almost anything. Let’s look at some application fields you have probably encountered:

- Computer Vision: Algorithms for image recognition, like the ones that detect faces in pictures.

- Natural Language Processing (NLP): Algorithms for text and speech recognition, like “Hey Siri”. It also includes text summarization, sentiment analysis, and topic detection.

- Autonomous robots: Algorithms for robotic automation, including self-driving cars, or delivery robots.

- Recommendation systems: Netflix, your social network feeds, Amazon recommending products. Those are everywhere.

- Search: Google Search, Bing.

- Data Science: I am deliberately listing Data Science as a domain of application. It is neither a task nor a tool, but rather an industry term for data analysis and applied Machine Learning or Data Mining.

- FinTech: Algorithms have been used in finance for a very long time, and improvements in hardware and software have opened up a whole new area where “quants” are increasingly replaced by data scientists.

- EdTech: Duolingo, Khan Academy, and Knewton have all developed algorithms for teaching.

Pick a field, and there is someone, somewhere, using AI. We can use AI to predict how proteins fold and to discover new medicines, to advance data processing in physics, or to (try to) improve healthcare.

Where does AI go from here?

I have been careful not to mention Generative AI (genAI) so far 😇. Where does genAI fit in my nomenclature of tasks/tools/applications? GenAI is the family of algorithms that can generate things (y) given an input (often a prompt, x), so it is a task. If I ask a genAI algorithm to generate a picture of a muffin, it needs to “know” what a muffin is. Its dataset needs to contain pictures of muffins, labeled as 🧁. In that sense, genAI coalesces all the other algorithm families into one big ensemble of algorithms.
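To see genAI through the same f(x) = y lens, here is a deliberately tiny generative model: a bigram (word-pair) Markov chain that learns which word tends to follow which in a small invented corpus, then extends a prompt x into a generated text y. Modern generative models are vastly more complex (large neural networks trained on enormous datasets); this sketch only illustrates the prompt-in, generated-output-out shape. `CORPUS`, `train`, and `generate` are hypothetical names.

```python
import random
from collections import defaultdict

CORPUS = (
    "the muffin is sweet . the dog is fluffy . "
    "the muffin is round . the dog is loyal ."
)

def train(text):
    """Learn, for every word, the list of words observed right after it."""
    words = text.split()
    follows = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        follows[current].append(nxt)
    return follows

def generate(model, prompt, max_words=6, seed=0):
    """f(x) = y: extend the prompt x one sampled word at a time."""
    rng = random.Random(seed)
    words = prompt.split()
    for _ in range(max_words):
        options = model.get(words[-1])
        if not options:  # this word was never seen followed by anything
            break
        words.append(rng.choice(options))
    return " ".join(words)


model = train(CORPUS)
print(generate(model, "the muffin"))
```

The model can only recombine what it has seen: ask it about a cat, and it has nothing to say, which echoes the point that a genAI algorithm needs muffins in its data before it can produce one.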

Generative AI is where (most of) the field of Artificial Intelligence is going, transcending its parts into a great complex machine. A machine nonetheless.